Media, Society, and Culture Lesson Module

Seeing Isn’t Believing

RepresentUs, CC BY 3.0 <https://creativecommons.org/licenses/by/3.0>, via Wikimedia Commons: A synthetic Kim Jong Un warns Americans—evidence that our eyes/ears are no longer reliable gatekeepers.

This video clip is a "deepfake" of Kim Jong Un created in 2020 by RepresentUs, a non-partisan non-profit organization, to raise public awareness about fake news in the emerging age of generative AI. The footage itself is fully synthetic, yet it is an amalgamation of genuine video footage and voice samples of the North Korean leader drawn from publicly available news archives. It was intentionally designed to look real enough to unsettle us, but still recognizable as “fake” for educational purposes. Since 2020, deepfake technology has advanced considerably in realism, accessibility, and speed of production.

Today, we’ll be surveying the phenomenon of fake news: clarifying what the term actually means, tracing its historical trajectories, mapping its online growth, examining how it spreads, and considering its broader implications for media, politics, and public trust.

1) What “Fake News” Is (and Isn’t)

“The Fake News losers at CNN immediately tried to fact check it, but President Trump was right (as usual).” - The White House. Archived March 6, 2025.

We will treat this snippet from the White House's official news release of March 5, 2025 as a discursive example: the label “fake news” is now a political buzzword, applied to disliked coverage or even verified information that conflicts with our priors. To keep the concept analytically useful, we’ll reserve it for counterfeit journalism: content that mimics the form of news without its fact-seeking processes, often scaled by manipulative distribution.

A three-layer understanding of fake news:

1. Content-level: fabricated or grossly deceptive claims presented as fact (e.g., synthetic media, conspiracy copy).

2. Source-level: outlets with persistent deception, undisclosed funding/automation, or serial policy violations.

3. Distribution manipulation: bots, troll farms, and coordinated networks that simulate consensus and virality.

- When you’ve seen “fake news” used as a label, which layer (content, source, distribution) was actually at issue?

- What visible cues do you rely on for credibility? Which of those cues are easiest to counterfeit?

2) A Short History of Fake News

Source: New York Journal front page, March 25, 1898. Library of Congress, Washington, D.C.

In World War I, the United States built a modern war propaganda apparatus, the Committee on Public Information (the “Creel Committee”), to saturate the public sphere with persuasive messaging (read more: Public Relations: Industry, Practices, and Democratic Implications).

Media panics also have a history: the infamous “mass panic” over Orson Welles’s 1938 War of the Worlds broadcast was real for some listeners but widely exaggerated by rival newspapers.

During the Cold War, the KGB seeded Operation Denver/INFEKTION, a disinformation campaign alleging the U.S. invented HIV/AIDS, one of the many Cold War templates for transnational conspiracy diffusion.

3) The Growth of Online Fake News

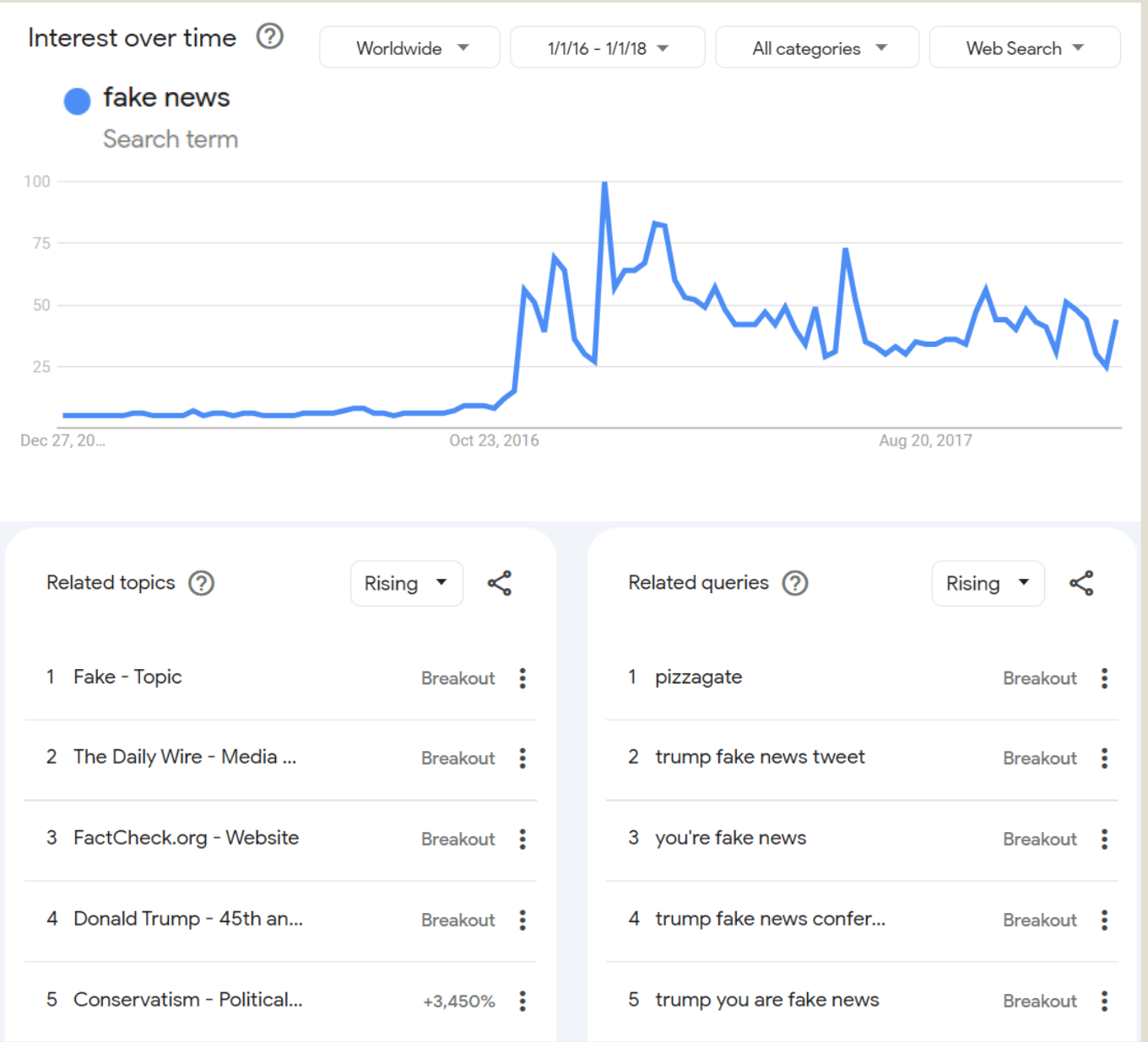

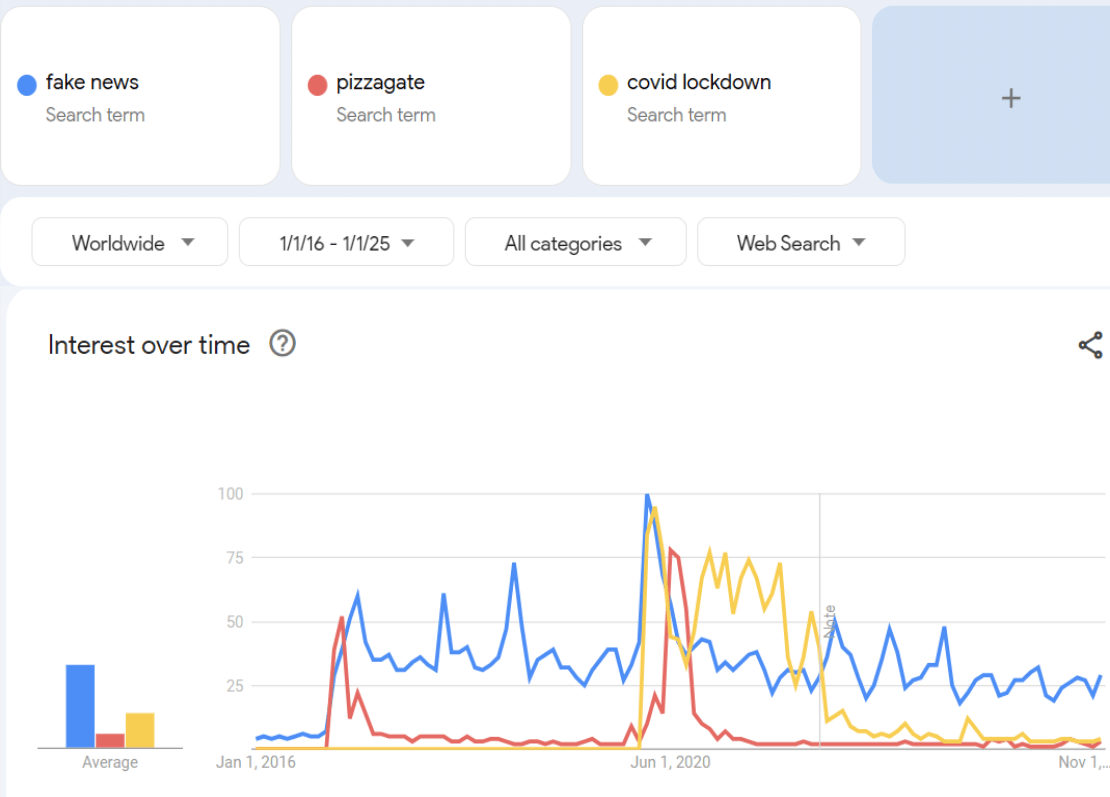

We now live in a media ecosystem where fake news is becoming ubiquitous. It is also increasingly difficult to distinguish legitimate journalism from fake news. How did we get here? Search interest in “fake news” rises with catalytic events. The accompanying Google Trends visuals highlight the 2016–2018 surge in searches for the term “fake news” as the phenomenon entered mainstream discourse.

These patterns highlight how moments of uncertainty and identity politics catalyze misinformation, while digital platforms supply the fuel in the form of rising topics and queries.

Since 2016, many fake news outlets have achieved remarkable success in building audiences and expanding subscriptions by promoting viral, partisan news content across social media platforms. A notable example is The Epoch Times, which originated in 2000 as a Falun Gong–operated newsletter associated with the controversial new religious movement (NRM). Over time, it evolved into a major digital publisher that aggressively circulated partisan conspiracy narratives, such as “Spygate,” “QAnon,” and the “COVID-19 bioweapon” conspiracy, while using targeted platform advertising to grow its subscriber base.

4) How Fake News Spreads

Bots and early amplification

Shao, Chengcheng, Giovanni Luca Ciampaglia, Onur Varol, Alessandro Flammini, and Filippo Menczer. "The spread of fake news by social bots." arXiv preprint arXiv:1707.07592 (2017).

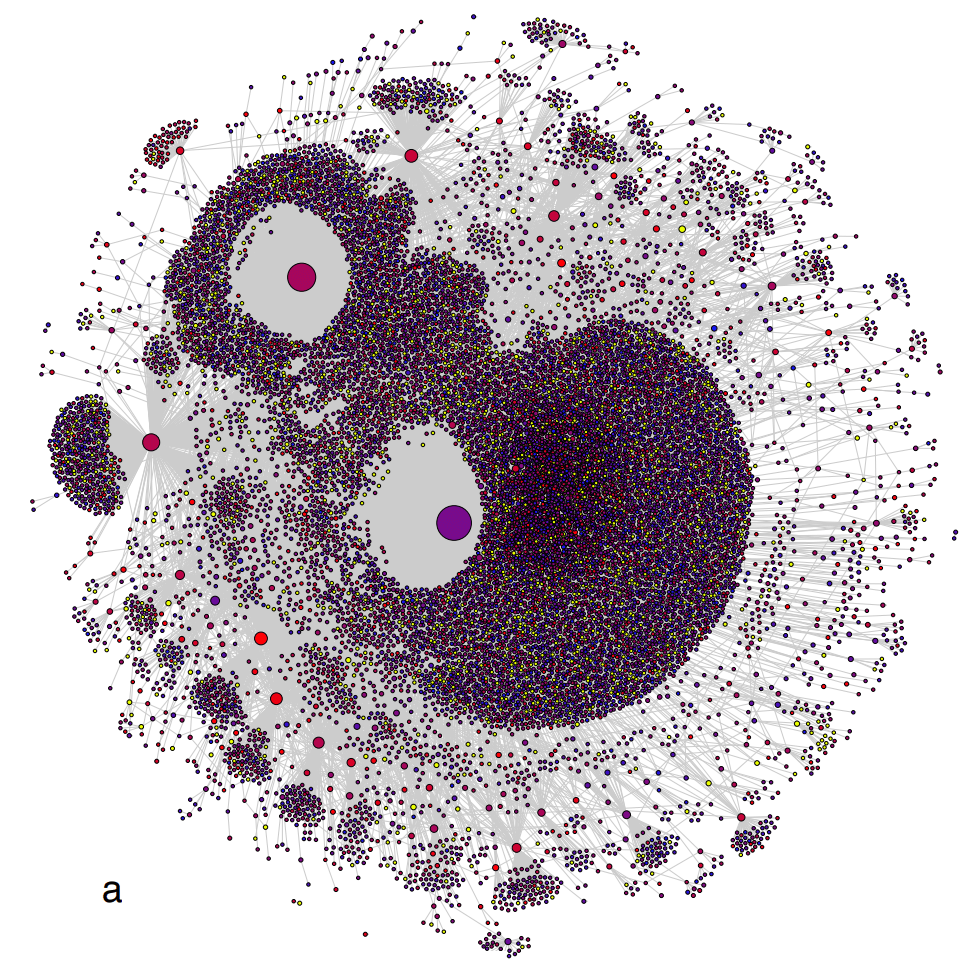

A similar pattern of automated fake news dissemination was observed in a 2023 analysis conducted by Albert Orozco Camacho (McGill University):

These cases align with platform-level research suggesting substantial automated and coordinated sharing of fake news content in our media ecosystem.

Echo Chambers and Algorithmic Rhetoric

When polarized communication networks fragment into relatively insular clusters that are internally homogeneous in both ideology and rhetoric, they are referred to as echo chambers.

Algorithms also play a central role in sustaining these chambers. Most social media platforms rely on engagement-driven recommendation systems that privilege content likely to be clicked, shared, or commented on. Over time, these algorithmic feedback loops self-optimize to deliver material that resonates with users’ pre-existing tastes, intensifying the affective appeal of familiar narratives while quietly filtering out dissonant ones. Thus, algorithms also function rhetorically: they perform acts of invention (heurēsis) by curating what enters the field of public attention; they enact style and delivery by determining the rhythm and prominence of posts in a user’s feed; and they amplify identification by clustering like-minded publics into algorithmically attuned communities.
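To make the feedback loop concrete, here is a minimal toy sketch in Python. It is not any platform's actual ranking system: the function name `simulate_feed`, the three sample items, the leaning scale from -1 to 1, and the `learning_rate` parameter are all assumptions made up for illustration. The only idea it borrows from the text is that content the user engages with gets shown more in the next round.

```python
def simulate_feed(user_leaning, items, rounds=5, learning_rate=0.5):
    """Toy engagement-driven recommender (illustrative assumptions only).

    user_leaning: the user's prior position on a scale from -1 to 1.
    items: dict mapping an item name to its leaning on the same scale.
    Returns one dict of feed shares per round.
    """
    # Every item starts with the same ranking weight.
    weights = {name: 1.0 for name in items}
    history = []
    for _ in range(rounds):
        total = sum(weights.values())
        # Share of the feed each item occupies in this round.
        history.append({name: w / total for name, w in weights.items()})
        for name, leaning in items.items():
            # Assumed engagement model: the closer an item sits to the
            # user's leaning, the more likely the user is to engage (0 to 1).
            engagement = 1.0 - abs(user_leaning - leaning) / 2.0
            # The ranker boosts whatever earned engagement.
            weights[name] *= 1.0 + learning_rate * engagement
    return history


# Hypothetical items and user, chosen only for illustration.
sample_items = {"congruent": 0.9, "neutral": 0.0, "dissonant": -0.9}
history = simulate_feed(user_leaning=0.8, items=sample_items)
for round_number, shares in enumerate(history, start=1):
    print(round_number, {name: round(s, 3) for name, s in shares.items()})
```

In this toy run every item starts with an equal share of the feed, and the congruent item's share grows each round while the dissonant item's share shrinks, even though no one ever decided to hide the dissonant item.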

The echo chamber phenomenon has been well documented:

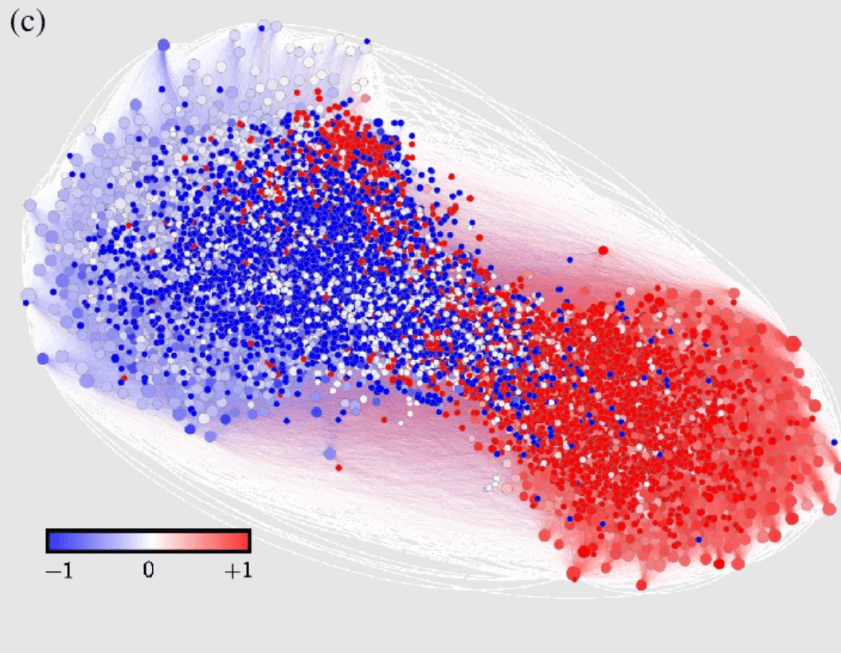

Cota, W., Ferreira, S.C., Pastor-Satorras, R. et al. Quantifying echo chamber effects in information spreading over political communication networks. EPJ Data Sci. 8, 35 (2019). https://doi.org/10.1140/epjds/s13688-019-0213-9

In the above visualization of the communication network around the debate over impeaching Dilma Rousseff (former Brazilian President), pro- and anti-impeachment users formed two well-separated clusters; the ability to reach large, diverse audiences depended on a user's position within (and bridges across) these chambers. Within these echo chambers, congruent content spreads with less friction, while corrective information struggles to cross cluster boundaries, thereby amplifying confidence without improving accuracy.
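One simple way to put a number on "well-separated clusters" is sketched below in Python. This is a toy illustration, not a reproduction of the method or data in Cota et al.: the six users, their camp labels, the edge list, and both function names are hypothetical. It computes the share of connections that stay inside one camp and the average leaning of each user's neighbors.

```python
from collections import defaultdict


def same_camp_edge_share(edges, camp):
    """Fraction of connections that link two users from the same camp."""
    same = sum(1 for u, v in edges if camp[u] == camp[v])
    return same / len(edges)


def mean_neighbor_leaning(edges, leaning):
    """Average leaning of each user's neighbors (edges are undirected)."""
    neighbors = defaultdict(list)
    for u, v in edges:
        neighbors[u].append(leaning[v])
        neighbors[v].append(leaning[u])
    return {user: sum(vals) / len(vals) for user, vals in neighbors.items()}


# Hypothetical toy network: +1 = pro-impeachment, -1 = anti-impeachment.
leaning = {"a": 1, "b": 1, "c": 1, "x": -1, "y": -1, "z": -1}
edges = [
    ("a", "b"), ("b", "c"), ("a", "c"),  # ties inside the first camp
    ("x", "y"), ("y", "z"), ("x", "z"),  # ties inside the second camp
    ("c", "x"),                          # a single bridge across camps
]

share = same_camp_edge_share(edges, leaning)
means = mean_neighbor_leaning(edges, leaning)
print(f"same-camp edge share: {share:.2f}")
print({user: round(value, 2) for user, value in means.items()})
```

In this toy network six of the seven ties stay inside a camp, and only the two bridge users, `c` and `x`, have neighbors whose average leaning moves toward the other side. Everyone else hears only their own camp.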

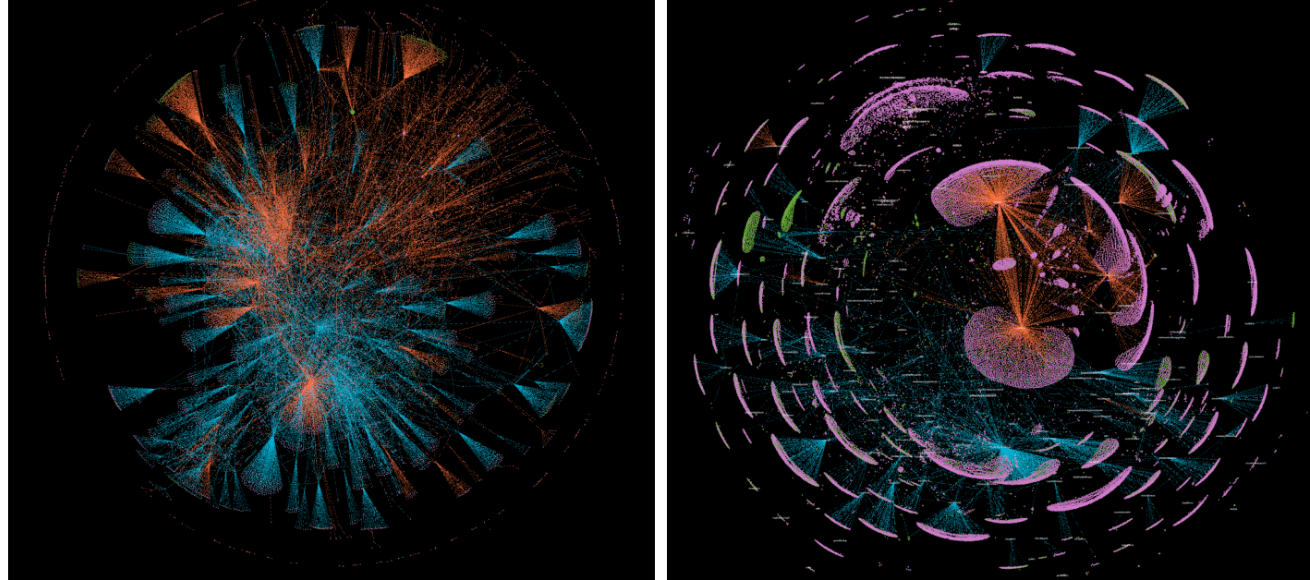

Just like social bots, tightly connected “echo chamber” communities can bootstrap the early reach of fake news. The data visualization by Gilad Lotan, Head of Data Science & Analytics at BuzzFeed, shows the initial user groups who shared the viral fake-news video “The Truth About Hillary’s Bizarre Behavior” in 2016 (which now has over 7.5M views).

5) 2018 Knight Foundation Study & Policy Implications

The 2018 Knight Foundation report by Matthew Hindman and Vlad Barash represents one of the most comprehensive analyses conducted on the diffusion of fake news across Twitter, examining its patterns both during and in the aftermath of the 2016 U.S. election.

Key takeaway

The researchers found that the disinformation ecosystem was not driven by innumerable fringe sites but by a dense, automated supercluster of high-impact accounts whose activity shaped the overall information environment. In this view, meaningful mitigation depends less on chasing individual stories and more on targeting the dominant hubs of distribution and the automated systems that sustain them.

The study also revealed clear partisan and international dynamics. Left-leaning disinformation networks that were active during the 2016 U.S. election diminished sharply after the vote, while right-wing and Russia-aligned clusters remained active and influential. Before the election, Russian-affiliated accounts often served as brokers, linking U.S. conservative circles to European far-right networks. Afterward, this role shifted to transnational conspiracy communities that maintained cross-platform amplification of anti-establishment narratives.

Many of the most viral stories did not spread organically. Instead, coordinated blocks of accounts strategically extended their visibility, functioning as what the report termed “information-warfare air cover” for pre-existing ideological narratives. Between 2016 and 2018, over 80% of disinformation accounts identified during the election remained active, collectively generating nearly one million tweets per day. Just ten sites were responsible for roughly 65% of all fake-news links, while the top 50 sites accounted for almost 90%, a concentration that remained stable months later.

Automation was also decisive: approximately 63% of the sampled accounts, and likely up to 70% overall, were either automated (bots) or semi-automated users that could generate and circulate content at scale.

Policy Implications and Platform Responsibility

The 2018 Knight Foundation report also highlighted significant enforcement failures. More than four-fifths of the fake-news–linked Twitter accounts from the 2016 election were still active two years later. The concentration of misinformation sources remained virtually unchanged, with the top fifty sites continuing to generate nearly ninety percent of the overall fake-news traffic. Bots and semi-automated accounts were central to this activity—up to seventy percent of the accounts in the dataset were estimated to be automated or partially automated.

Yet the report demonstrated that certain stopgap interventions could significantly curb disinformation. The authors recommended challenge–response authentication measures (e.g., CAPTCHA verification) for political and news-related accounts, alongside bot labeling and the exclusion of automated accounts from follower and engagement metrics. These adjustments would reduce the algorithmic visibility and perceived legitimacy of fake-news amplification networks.

Finally, the researchers emphasized targeted interventions: focusing enforcement on the most influential fake-news domains rather than dispersing resources across countless minor actors. When Twitter suspended The Real Strategy (one of the top-performing fake-news outlets at the time), mentions and links to the site dropped by 99.8%. This example illustrated how focusing on a small number of dominant actors and their automation infrastructure can yield disproportionate benefits for information integrity across the entire network.

- What policy risks or unintended consequences might arise from aggressive bot labeling or mandatory CAPTCHA verification for political accounts?

- Should social media companies be treated as neutral intermediaries or as rhetorical actors with civic responsibilities in the digital public sphere? How might this distinction affect the legitimacy of platform interventions?

References

- Tandoc Jr., Edson C., Zheng Wei Lim, and Richard Ling. “Defining ‘Fake News’: A Typology of Scholarly Definitions.” Digital Journalism 6, no. 2 (2018).

- Fallis, Don, and Kay Mathiesen. “Fake News Is Counterfeit News.” Inquiry (2019).

- Library of Congress. “The Spanish–American War and the Yellow Press.” 2024.

- Library of Congress. “Belief, Legend, and the Great Moon Hoax.” Folklife Today.

- Vida, István Kornél. "The ‘Great Moon Hoax’ of 1835." Hungarian Journal of English and American Studies (HJEAS) (2012): 431-441.

- Creel, George. How We Advertised America: The First Telling of the Amazing Story of the Committee on Public Information That Carried the Gospel of Americanism to Every Corner of the Globe. Harper & Brothers, 1920.

- Battles, Kathleen, and Joy Elizabeth Hayes. "The enduring significance of The War of the Worlds as broadcast event." In The Routledge Companion to Radio and Podcast Studies, pp. 217-225. Routledge, 2022.

- Wilson Center. “Operation Denver: KGB and Stasi Disinformation Regarding AIDS.” Cold War Archives, 2019.

- Gold, Michael. “How Shen Yun and The Epoch Times Became a Mega-Influence Machine.” The New York Times, December 30, 2024. https://www.nytimes.com/2024/12/30/nyregion/shen-yun-epoch-times-falun-gong.html

- Associated Press. “What Will Become of The Epoch Times with Its CFO Accused of Money Laundering?” June 2024. https://apnews.com/article/epoch-times-conservative-trump-money-laundering-543d184d378e570683e7d10326973240

- Shao, Chengcheng, Giovanni Luca Ciampaglia, Onur Varol, Alessandro Flammini, and Filippo Menczer. “The Spread of Low-Credibility Content by Social Bots.” arXiv (2017) arXiv:1707.07592.

- Zimmer, F., Scheibe, K., Stock, M. and Stock, W.G., 2019, January. Echo chambers and filter bubbles of fake news in social media. Man-made or produced by algorithms. In 8th annual arts, humanities, social sciences & education conference (pp. 1-22).

- Cota, Wesley, Silvio C. Ferreira, Romualdo Pastor-Satorras, and Mikko Starnini. “Quantifying Echo Chamber Effects in Information Spreading over Political Communication Networks.” EPJ Data Science 8 (2019): 35. https://doi.org/10.1140/epjds/s13688-019-0213-9.

- Wang, Zhaozhe. "Post-rhetoric: A rhetorical profile of the generative artificial intelligence chatbot." Rhetoric Review 43, no. 3 (2024): 155-172.

- Reyman, Jessica. "The rhetorical agency of algorithms." In Theorizing digital rhetoric, pp. 112-125. Routledge, 2017.

- Wang, Keren. "Legal and Ritological Dynamics of Personalized “Pillars of Shame” in Chinese Social Credit System Construction." China Review 24, no. 3 (2024): 179-206. https://www.jstor.org/stable/48788933

- Orozco Camacho, Albert. A Study of Social Media Trolls via Graph Representation Learning. Master’s thesis, McGill University, 2023. https://escholarship.mcgill.ca/concern/theses/j3860d25r.

- Woolley, Samuel C. "Automating power: Social bot interference in global politics." First Monday (2016).

- Hindman, Matthew, and Vlad Barash. Disinformation, ‘Fake News,’ and Influence Campaigns on Twitter. Washington, DC: Knight Foundation, 2018. https://knightfoundation.org/features/misinfo/.