Online lesson module and WIP chapter draft.


Long before the first integrated circuit pulsed with electricity, past societies contemplated thinking machines in forms that now read like allegories of our contemporary anxieties.

Indeed, the rhetorical roots of artificial intelligence stretch across civilizations, coded in the mythological and ritual practices of the past.

Whenever human beings come face to face with possibilities that unsettle the ordinary rhythms of existence, myth becomes a way to manage an encounter with what feels uncanny, excessive, or ontologically inconvenient.2 Phenomenologists like Heidegger remind us that myth-making often arises when our taken-for-granted understanding of Being is disturbed and we recoil into familiar symbolic structures rather than reflect upon the disclosive rift that has opened in our lifeworld.3 The idea that “thinking artifacts” might one day act, judge, or even defy us has been one of humanity’s most persistent mytho-political narrative arcs, through which societies negotiate the boundary between what is intelligible and what threatens the stability of their world’s meaning.4

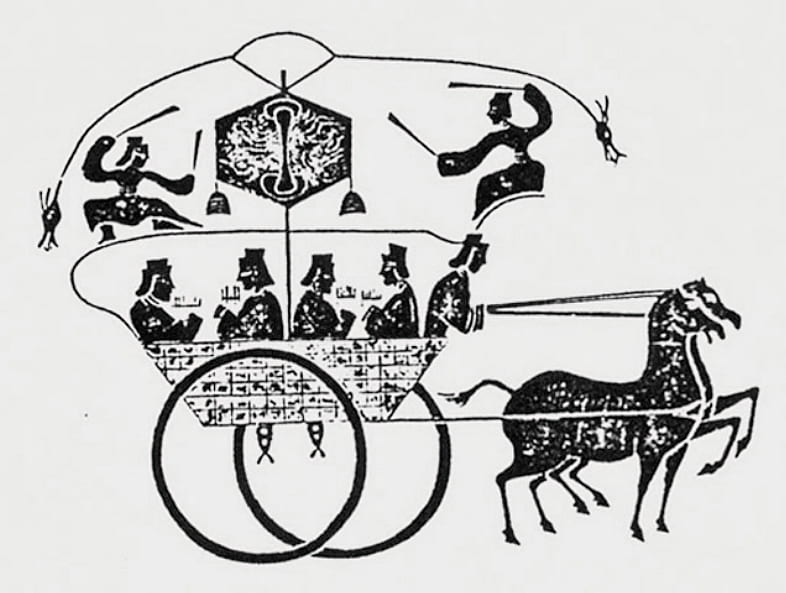

Yan Shi's Automata

In Classical Chinese texts, particularly the Liezi (a Taoist philosophical work attributed to Lie Yukou, c. 5th century BCE), we meet the master artificer 偃師 (Yan Shi). According to legend, he constructed life-sized mechanical performers for King Mu of Zhou. These elegant automata sang, danced, and responded to verbal commands. When one of Yan Shi’s creations flirted with the king’s concubines, King Mu erupted in fury and ordered the artificer executed. Yan Shi immediately halted the machine and dismantled it before the king. Inside were its wooden bones and cleverly hidden mechanisms, all bound by leather and lacquer.

The king, startled and relieved, spared the artificer’s life.5

Seen through a dramatistic lens, this amalgamation of wonder and terror becomes more than a curious anecdote from antiquity. The Taoist fable of Yan Shi’s mechanical performers crystallizes a recurring scene in the long dramaturgy of human–machine encounters. The revelation of the artifice is itself a rhetorical act, the moment when an embodied mechanism that mimics human life throws off its disguise and unsettles the boundaries of the living.

The scene unfolds within an ossified courtly world anxious about the fragility of its own authority, a world in which the imitation of life carries political as well as ontological consequences. The artificer appears as an agent of disruptive innovation, an antihero whose ingenuity both fascinates and threatens those who behold it.

Yet the deeper rhetorical agency in this dramaturgy of automata resides in the cleverly engineered corpus of the machine, whose apparent capacity for emotional exchange threatens to expose the fragile and inauthentic character of our own existence.

Also reflected in this classic Taoist tale is the dramatistic tension of human–machine intimacy. The king feels a fear tinged with the uncanny when he witnesses a moment of perceived intimate exchange between the court ladies and Yan Shi’s puppets. His alarm is not merely about impropriety; it expresses a deeper anxiety that the machine’s mimetic capacities trespass upon the most private terrains of human affect. This rhetorical ambiguity finds striking modern analogues. Contemporary discussions of algorithmic intimacy reveal similar oscillations between fascination and dread. One example is the backlash over Grok’s AI “Companions,” which feature anthropomorphic and sometimes sexualized avatars and were released with an optional “NSFW” mode in July 2025.

More interesting still is the king’s immediate reaction. Although momentarily overwhelmed by the automata’s eerily human ethos, he directs his fury not at the mechanical performers but at Yan Shi himself. Even in the instant when he nearly forgets the “machine-ness” of the puppets, the king reflexively assigns legal and moral responsibility for their transgression to their human creator. This gesture anticipates a profound debate over how to assign culpability, authorship, and legal obligation when machines unsettle our sense of agency.5

Talos

The ancient Greeks imagined Talos, a colossal bronze sentinel who patrolled the shores of Crete. His body was animated by molten ichor flowing through a single vein, a kind of primordial hydraulic robotics. Across the various traditional sources, the details of Talos’s construction differ, but one narrative arc remains remarkably consistent: sooner or later Talos turns against those he was built to protect, erupting into violent confrontations.6

Talos stands as the archetypal sentinel-agent. His metallic body patrolled the coastline of Crete, projecting the promise of security across the scene. Yet his very existence introduced a tacit anxiety: an autonomous superhuman guardian that always harbors the seeds of its own rebellion.

The ancient Greeks sensed that any mechanism entrusted with sovereign force risks acquiring a will of its own, or at least appearing to act with one.7 Talos thus becomes an early meditation on the peril of delegated agency: the machines we devise to secure our world inevitably acquire an interpretive ambiguity that can, in time, render them agents of our own vulnerable existence.

Interestingly, the variants of the Talos myth also converge on his weakness. This immensely powerful automaton was rendered vulnerable by a single point of failure: his ankle, where the one vein carrying the molten ichor that powered him was sealed.

In a way, Talos’s ankle anticipates our contemporary attempts at “AI safety.” Designers imagine that complex and increasingly autonomous systems can be reliably governed through a handful of fail-safes, redundancies, circuit breakers, or hard-coded rules. Talos reminds us that the dream of perfect control is always precarious, and that the very mechanisms meant to safeguard power often conceal its deepest vulnerabilities.8

Furthermore, the Talos myth resonates uncannily with present-day anxieties about the military applications of artificial intelligence. These range from lethal autonomous weapon systems (LAWS) in drone warfare to autonomous, nuclear-powered unmanned underwater vehicles designed to deliver strategic warheads (exemplified by Russia’s “Status-6” program). Across contemporary assessments, a similar through-line emerges. As militaries incorporate AI into surveillance, targeting, early-warning, and command-and-control environments, the delegation of lethal judgment to machines introduces new forms of opacity, vulnerability, and legal gray zones.8

Golem

In Jewish folklore, a golem is an autonomous anthropomorphic creature sculpted from clay and animated through mystic rituals and incantations. It serves as an apotropaic contraption, warding off danger. Yet every golem story eventually pivots on the same fulcrum: the creature grows too powerful, too destructive, or too venturesome, and its creator must dismantle it.9

With stories of golems we encounter a paradox that still shadows today’s AI debates: the paradox of creating something that swiftly slips beyond our ritual propriety.10 In golem narratives, the act of invention is always tethered to a ritual framework: repetitions of the correct incantations, inscriptions, and authoritative gestures. The ritual is meant to confine the golem within the boundary of its maker’s will. Yet the golem always grows powerful in ways its maker cannot delimit, which highlights the fragility of control when dealing with delegated agency.

The golem dramatizes the moment when an artifact ceases to be merely an instrument and becomes a quasi-agent, exposing the limits of oversight and the risks of entrusting our protections to beings that do not share our interpretive horizons.

The same rhetorical structure animates today’s scenes of algorithmic systems that perform brilliantly in narrow tasks yet behave unpredictably when conditions shift. One striking example is medical diagnostic AI that outperforms clinicians on controlled test datasets but generates dangerously incorrect recommendations when confronted with real-world clinical data. In such cases, the system’s confidence remains high, yet its judgment breaks in ways opaque to human overseers.

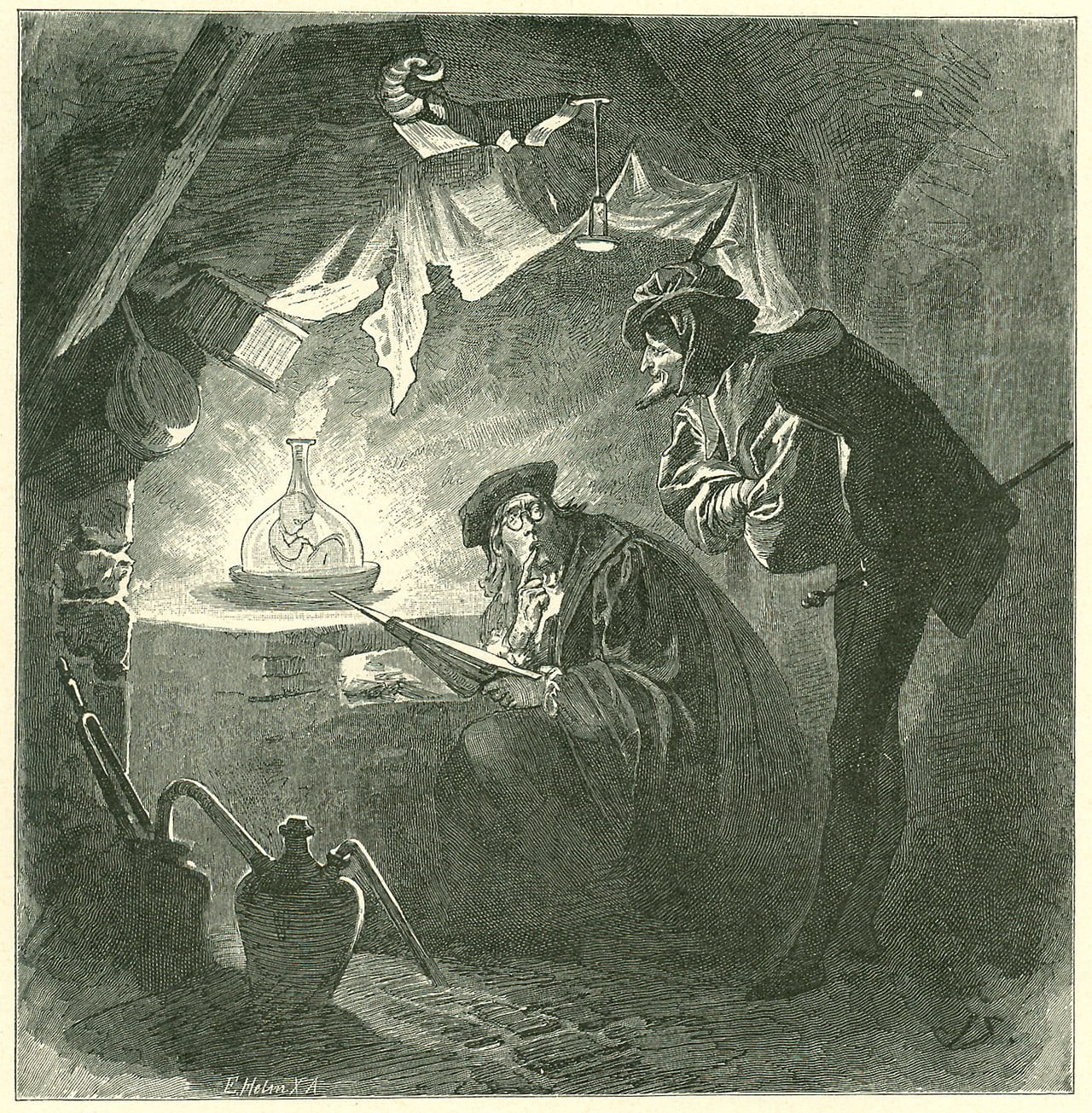

Faust’s Homunculus

In Goethe’s Faust, Part Two (1832), the Homunculus emerges from the Germanic alchemical tradition as an engineered spark of life, fashioned through arcane procedures and proto-scientific ambition. What begins as a playful experiment soon spirals, as many “Faustian bargain” tales do, into cosmic tragedy and overdetermined consequence.11

Goethe’s Homunculus embodies the intoxicating promise of creating autonomous, thinking creatures: an act propelled by the noble pursuit of knowledge yet haunted by unbridled ambition.

The Homunculus is a human-like mortal creature engineered in a vessel, meant to demonstrate technical mastery over the conditions of life itself. Yet in this daring inventive process, Faust’s former assistant Wagner, with Mephistopheles looking on, unleashes a being whose ontological status remains unresolved, neither fully artificial nor natural.12 This nature–artifact ambiguity sets the scene for the Homunculus’s demise. Its makers underestimate the complex entanglements that arise when an embodied intelligent creature is manufactured in vitro rather than allowed to emerge as a lived Being-in-the-world.

An ambiguity similar to that of Goethe’s Homunculus also shapes the rhetorical constraints underpinning contemporary work in two areas. The first is neuromorphic computing (see Kudithipudi et al., “Neuromorphic Computing at Scale”). The second is generative models inspired by the structure and function of the human brain.13 Engineers attempt to simulate forms of agency or artistic creativity, yet these systems operate without meaningful embodiment, historicity, or attunement to the lifeworld. In this sense, modern AI laboratories reprise Dr. Faust’s workshop. Technical mastery produces machines designed to approach forms of agentic superintelligence, yet their ontological standing remains unresolved, suspended between instrument and conscious agent.

The Long Arc of Artificial Imaginaries

At first glance, these mythologies seem remote from the technical architectures of AI. Yet they continue to furnish the collective memory structures through which modern societies interpret algorithmic advances. Present-day incarnations of “Dr. Faust” and “Yan Shi the master artificer” prophesy an Artificial Superintelligence that will either rescue or doom humanity. Policymakers fear losing the “geopolitical AI competition” or letting thinking machines “escape” human control. News headlines alternately promise a “fully automated space utopia” or warn of an impending “AI apocalypse.” All of these narratives draw upon ancient rhetorical and ritological foundations that long predate our contemporary machines.

More importantly, these rhetorical antecedents reveal something essential. Stories of automata and artificial beings recur across cultures because they reflect our enduring fascination with, and ethical deliberation over, the agency, artificiality, embodiment, and consciousness of machine-creatures. They also disclose our tacit obsession with, and fear of, human vulnerability and the loss of control. Alongside these runs our fantasy that something molded by human hands might one day transcend the limits of flesh, perhaps even mortality itself.

Before we speak of ChatGPT or autonomous vehicles, we must recognize that contemporary AI discourse is built upon an ancestral sensorium of myth, ritual, and desire. Our task, then, is to trace how these rhetorical and material developments converge, mutate, and reappear within modern discourses surrounding artificial intelligence.

This brings us to the need for conceptual clarity. In Part 2 of this meditation, we will demystify the concept of AI in four acts. Each act examines how discourse surrounding thinking machines and the algorithmic lifeworld evolved across shifting technological scenes: the era of procedural automation, the machine learning turn, the rise of generative models, and finally the accelerating push toward artificial superintelligence (ASI).

To be continued…

Footnotes

1 Christian Fuchs, “Robots and Artificial Intelligence (AI) in Digital Capitalism,” in Digital Humanism: A Philosophy for 21st Century Digital Society, 111–154 (Emerald Group Publishing Limited, 2022).

2 Keren Wang, “An Interdisciplinary Historical Overview,” in Legal and Rhetorical Foundations of Economic Globalization: An Atlas of Ritual Sacrifice in Late Capitalism (London: Routledge, 2019), 31–52.

3 Joshua D. F. Hooke, “Martin Heidegger’s Concept of Understanding (Verstehen): An Inquiry into Artificial Intelligence,” Analecta Hermeneutica 15 (2023).

4 Keren Wang, Legal and Rhetorical Foundations of Economic Globalization: An Atlas of Ritual Sacrifice in Late Capitalism (New York: Routledge, 2019), 13–14, https://doi.org/10.4324/9780429198687.

5 Chinese Text Project, “《列子·湯問》” (Liezi, “Tang Wen” [“The Questions of Tang”]). Accessed Month Day, Year. https://ctext.org/liezi/tang-wen/zh.

See also Samir Chopra and Laurence F. White, A Legal Theory for Autonomous Artificial Agents (Ann Arbor: University of Michigan Press, 2011).

6 Genevieve Liveley, “Talos: Overcoming the AI Monster?,” in Artificial Intelligence in Greek and Roman Epic (2024), 105.

7 Adrienne Mayor, Gods and Robots: Myths, Machines, and Ancient Dreams of Technology (2018), 1–304.

8 Roman V. Yampolskiy and M. S. Spellchecker, “Artificial Intelligence Safety and Cybersecurity: A Timeline of AI Failures,” arXiv preprint arXiv:1610.07997 (2016).

See also Norman Friedman, “Status-6: Russian Drone Nearly Operational,” U.S. Naval Institute Proceedings 145, no. 4 (April 2019), https://www.usni.org/magazines/proceedings/2019/april/status-6-russian-drone-nearly-operational.

See also Shayne Longpre, Marcus Storm, and Rishi Shah, “Lethal Autonomous Weapons Systems & Artificial Intelligence: Trends, Challenges, and Policies,” ed. Kevin McDermott, MIT Science Policy Review 3 (2022): 47–56.

9 Cathy S. Gelbin, “The Golem: From Enlightenment Monster to Artificial Intelligence,” Bulletin of the German Historical Institute 69 (2021): 79–94.

10 Keren Wang, “Legal and Ritological Dynamics of Personalized ‘Pillars of Shame’ in Chinese Social Credit System Construction,” China Review 24, no. 3 (2024): 179–206. https://www.jstor.org/stable/48788933.

11 Karamjit S. Gill, “Faust, Freud, Machine: Encounters and Performance,” AI & Society 28, no. 3 (2013): 253–255.

12 Francesco Rossi, “Hyperrealities and Simulacra in Goethe’s Faust,” Between 12, no. 24 (2022): 441–459.

13 Daniel Lim, “Brain Simulation and Personhood: A Concern with the Human Brain Project,” Ethics and Information Technology 16, no. 2 (2014): 77–89, https://doi.org/10.1007/s10676-013-9330-5.